LLM Academic Research and Development Guide: From Mathematics to Practice in 2026

This guide outlines the essential knowledge areas for LLM academic research and development, including mathematics (linear algebra, calculus, probability, convex optimization), programming languages (Python, C/C++), frameworks (PyTorch, TensorFlow, etc.), common models (MLP, CNN, RNN, Transformer variants), and LLM-specific techniques (prompt engineering, RAG, fine-tuning). It emphasizes practical learning through hands-on implementation and leveraging AI tools.


Introduction

This is clearly a request for an academically oriented guide, so I will walk through the whole learning path for LLM work, from mathematics and programming languages to frameworks and commonly used models. It may also read as a deterrent for anyone who dislikes studying.

To do LLM-related academic research and development fluently, you need at least four things: mathematics, coding, knowledge of common models, and LLM-specific knowledge. Only once you are comfortable with all of them can you read papers in a research direction quickly and judge whether that direction is worth digging into.

TL;DR

Core Requirements Summary

- Mathematics: linear algebra, advanced mathematics (calculus), probability, convex optimization

- Programming languages: Python, C/C++

- Frameworks: NumPy, PyTorch, TensorFlow, Keras, ONNX

- Common models: MLP, CNN, RNN, Transformer (e.g., GPT-2), plus newer sequence architectures (RWKV, Mamba, TTT)

- LLM-specific: prompting frameworks, RAG techniques, and fine-tuning methods

Alright, let's begin the full "deterrence" version.

The Foundational Pillar: Mathematics

Mathematics is the Foundation, But It Might Not Be a Major Issue for Graduate Students

For people who graduated a while ago, the mathematics needs a brief review. For a first-year graduate student it is usually not a problem, since linear algebra, advanced mathematics, and probability are all required exam subjects. Convex optimization is the one course you may need to study on your own.

Linear Algebra: Key concepts include vectors, matrices, eigenvalues, and eigenvectors. Important formulas involve matrix multiplication, determinants, and the eigenvalue equation $Av = \lambda v$, where $A$ is a square matrix, $v$ is a nonzero eigenvector, and $\lambda$ is the corresponding eigenvalue.
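To make the eigenvalue equation concrete, here is a minimal sketch that checks $Av = \lambda v$ by hand for one small matrix. The matrix, vector, and the helper name `matvec` are illustrative assumptions, not something taken from the guide.

```python
def matvec(A, v):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(a_ij * v_j for a_ij, v_j in zip(row, v)) for row in A]

# Hypothetical 2x2 example, chosen so the arithmetic is easy to follow by hand.
A = [[2.0, 1.0],
     [1.0, 2.0]]
v = [1.0, 1.0]   # candidate eigenvector
lam = 3.0        # candidate eigenvalue

Av = matvec(A, v)               # left-hand side: A v
lam_v = [lam * x for x in v]    # right-hand side: lambda v

# (lam, v) is an eigenpair exactly when both sides agree.
is_eigenpair = all(abs(a - b) < 1e-12 for a, b in zip(Av, lam_v))
print(Av, lam_v, is_eigenpair)
```

In practice you would let a library routine such as `numpy.linalg.eig` find the eigenpairs; the point here is only to see what the equation asserts.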

Advanced Mathematics: This is essentially differential and integral calculus, with a focus on understanding limits, derivatives, and integrals. The derivative of a function $f(x)$ at a point $x$ is $f'(x) = \lim_{h \to 0} \frac{f(x+h) - f(x)}{h}$, provided the limit exists. The Fundamental Theorem of Calculus links differentiation and integration.
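The limit definition of the derivative can be tried out numerically. The sketch below uses a forward difference with a small but finite $h$; the function names and the test function $f(x) = x^2$ are assumptions picked for illustration.

```python
def forward_difference(f, x, h=1e-6):
    """Approximate f'(x) with the difference quotient (f(x+h) - f(x)) / h."""
    return (f(x + h) - f(x)) / h

def square(x):
    """Example function f(x) = x^2, whose exact derivative is 2x."""
    return x * x

x0 = 3.0
exact = 2.0 * x0
approx = forward_difference(square, x0)

# The approximation error shrinks as h gets smaller (until rounding dominates).
errors = [abs(forward_difference(square, x0, h) - exact) for h in (1e-1, 1e-2, 1e-3)]
print(approx, errors)
```

Automatic differentiation in frameworks such as PyTorch computes exact derivatives rather than this approximation, but finite differences remain a handy way to sanity-check a gradient.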

Probability: Key points include the probability axioms, conditional probability, Bayes' Theorem, random variables, and distributions. For example, Bayes' Theorem states $P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$ for $P(B) > 0$, which updates the probability of $A$ once $B$ is known to have occurred.
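A short worked example shows how Bayes' Theorem updates a belief. All numbers below are made up for illustration (a 1% prior, a 90% true-positive rate, a 5% false-positive rate), and `bayes_posterior` is a hypothetical helper name.

```python
def bayes_posterior(prior_a, likelihood_b_given_a, likelihood_b_given_not_a):
    """Return P(A|B) from Bayes' theorem and the law of total probability."""
    # P(B) = P(B|A) P(A) + P(B|not A) P(not A)
    evidence = (likelihood_b_given_a * prior_a
                + likelihood_b_given_not_a * (1.0 - prior_a))
    return likelihood_b_given_a * prior_a / evidence

# Hypothetical inputs: P(A) = 0.01, P(B|A) = 0.90, P(B|not A) = 0.05.
posterior = bayes_posterior(
    prior_a=0.01,
    likelihood_b_given_a=0.90,
    likelihood_b_given_not_a=0.05,
)
print(f"P(A|B) = {posterior:.4f}")
```

Even with a 90% hit rate, the posterior stays at roughly 15% because the prior is so small, which is the kind of intuition this formula is meant to build.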

Convex Optimization: This covers problems in which a convex objective function is minimized over a convex feasible set, so that any local minimum is also a global minimum. Key concepts include convex sets, convex functions, gradient descent, and Lagrange multipliers. The gradient descent update rule is $x_{n+1} = x_n - \alpha \nabla f(x_n)$, where $\alpha > 0$ is the learning rate (step size). You may need to put in some extra effort here.
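The update rule $x_{n+1} = x_n - \alpha \nabla f(x_n)$ is easy to run by hand on a one-dimensional convex function. This is a minimal sketch; the objective $f(x) = (x - 3)^2$, the step size, and the step count are assumptions chosen so that convergence is obvious.

```python
def gradient_descent(grad, x0, alpha=0.1, steps=100):
    """Iterate x <- x - alpha * grad(x) for a fixed number of steps."""
    x = x0
    for _ in range(steps):
        x = x - alpha * grad(x)
    return x

def grad_f(x):
    """Gradient of the convex example f(x) = (x - 3)^2."""
    return 2.0 * (x - 3.0)

# Start far from the minimizer x* = 3 and let the iteration pull x toward it.
x_min = gradient_descent(grad_f, x0=0.0)
print(x_min)
```

With this objective each step shrinks the distance to the minimizer by a constant factor of $1 - 2\alpha$, so a step size of $\alpha \ge 1$ would make the iteration diverge; choosing the learning rate is the part that needs care in real training.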

The Practical Toolkit: Programming

Coding: From Time-Consuming to AI-Assisted Development

I used to write a great deal about coding, but recent AI tools have changed that: Cursor, or any capable large language model, can now guide you through basic coding work.

So you only need to know that the following knowledge is required.

- The core languages to master are Python and C/C++. If you want to go further, you can also study CUDA.

- NumPy: mainly learn how to create, index, and manipulate array data.

- PyTorch, TensorFlow, and Keras are the tools for building networks and training them. ONNX is a format that some models are released in; you need to be able to use it to run and inspect such models.
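As a taste of what "handling data" with NumPy means in practice, the sketch below covers array creation, axis-wise reduction, broadcasting, and matrix multiplication. The array contents are arbitrary illustrative values, and the example assumes NumPy is installed.

```python
import numpy as np

# A 2x3 array: two samples with three features each.
X = np.arange(6, dtype=np.float64).reshape(2, 3)

# Reduce along axis 0 to get one mean per feature (shape (3,)).
col_means = X.mean(axis=0)

# Broadcasting: the (3,) vector is subtracted from every row of the (2, 3) array.
centered = X - col_means

# Matrix multiplication with the @ operator: (2, 3) @ (3, 2) -> (2, 2).
W = np.ones((3, 2))
Y = X @ W

print(X.shape, col_means, centered, Y, sep="\n")
```

Most PyTorch tensor operations follow the same shape and broadcasting conventions, so time spent on NumPy carries over directly.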

In practice, though, knowing how to ask a large AI model the right questions is often enough for these tasks.

The Evolutionary Landscape: From Classic to Cutting-Edge Models

Common Models: Understanding the Past to Grasp Future Breakthroughs

You should be reasonably familiar with the typical MLP, CNN, and RNN models, and I recommend implementing them by hand.

The recommended networks are:

- LeNet-5: One of the earliest convolutional neural networks.

- AlexNet: Its win in the 2012 ImageNet image classification competition marked the start of deep learning's widespread adoption.

- VGGNet: Known for its depth and its use of small (3x3) convolutional kernels. The commonly used versions are VGG16 and VGG19.

- ResNet (Residual Networks): Residual connections make very deep networks much easier to train by easing gradient flow, mitigating the vanishing gradient and degradation problems. The best-known versions are ResNet-50 and ResNet-101.

- Long Short-Term Memory (LSTM): Uses gating mechanisms to mitigate the long-term dependency problem of standard RNNs, and was long a standard model for sequence data.

- Gated Recurrent Unit (GRU): A simplified variant of the LSTM with fewer parameters, often reaching similar performance at lower computational cost.

- Bidirectional RNN: An RNN variant that reads the sequence in both directions so that each position sees both preceding and following context; commonly used in NLP tasks.
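Of the ideas in the list above, the residual connection is the quickest to write by hand. The sketch below is a deliberately simplified, fully connected version in NumPy, $y = x + F(x)$; real ResNet blocks use convolutions, batch normalization, and a ReLU after the addition, and the names `relu` and `residual_block` are illustrative assumptions.

```python
import numpy as np

def relu(x):
    """Element-wise rectified linear unit."""
    return np.maximum(x, 0.0)

def residual_block(x, W1, W2):
    """Simplified residual block: y = x + F(x), with F a two-layer transform."""
    return x + relu(x @ W1) @ W2

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))              # batch of 4 vectors with 8 features
W1 = rng.normal(scale=0.1, size=(8, 8))
W2 = np.zeros((8, 8))                    # zero weights make F(x) vanish

# With F(x) = 0 the block reduces to the identity, so the input passes through
# unchanged; stacking such blocks cannot make the representation worse at init.
y = residual_block(x, W1, W2)
print(y.shape, np.allclose(y, x))
```

The skip path also gives gradients a direct route back to earlier layers, which is the intuition behind why very deep residual networks remain trainable.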

As for newer architectures, look into RWKV, Mamba, and TTT. All three show considerable promise.

The Heart of the Matter: Large Language Models (LLMs)

LLM-Related Knowledge

This is your real target. Almost everyone working in AI is concentrated here now, competing fiercely over every aspect of this seemingly simple architecture:

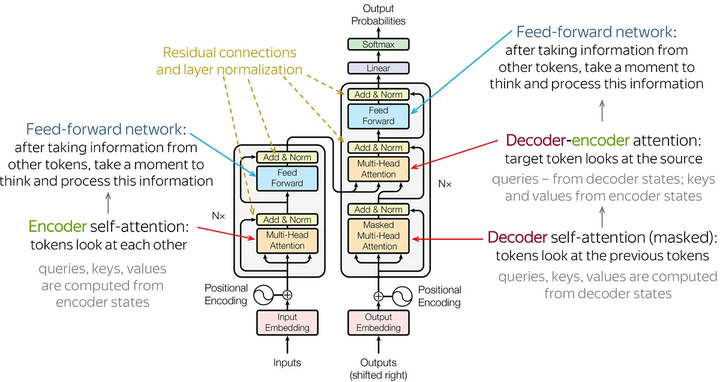

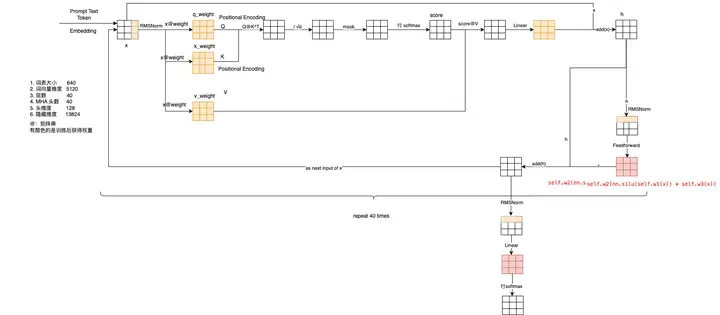

I recommend writing a Transformer model by hand, or at the very least the Attention structure. You also need to understand the accompanying diagram. You will then get a feel for how such a minimal model follows the Scaling Law. AGI may well lie in this simple repetition and scaling up!
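For the Attention part specifically, a minimal single-head sketch in NumPy might look like the following. It implements scaled dot-product attention, $\mathrm{softmax}(QK^\top / \sqrt{d_k})\,V$, with an optional causal mask as used in GPT-style decoders. The function names, shapes, and random inputs are assumptions for illustration; a full Transformer adds learned projections, multiple heads, residual connections, and layer normalization.

```python
import numpy as np

def softmax(scores, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = scores - scores.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V, causal=False):
    """Single-head attention. Q: (T_q, d_k), K: (T_k, d_k), V: (T_k, d_v)."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)              # (T_q, T_k) similarity scores
    if causal:
        # Mask out positions to the right of the diagonal (future tokens).
        T_q, T_k = scores.shape
        future = np.triu(np.ones((T_q, T_k), dtype=bool), k=1)
        scores = np.where(future, -np.inf, scores)
    weights = softmax(scores, axis=-1)           # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
T, d = 5, 16                                     # hypothetical sequence length and width
Q = rng.normal(size=(T, d))
K = rng.normal(size=(T, d))
V = rng.normal(size=(T, d))

out, weights = scaled_dot_product_attention(Q, K, V, causal=True)
print(out.shape, weights.round(2))
```

With the causal mask on, the first position can only attend to itself, so its output equals the first row of `V`; checking small facts like this is a good way to confirm a hand-written implementation before moving on to a framework version.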

Of course, you must also run an actual training job on real data. GPT-2 offers a very good worked example for this.

If you can make it this far, I think your skill level should be enough to get a paper out and finish a graduate degree at most institutions. For the top few schools... you will have to think that over yourself.

The Accelerated Path: Structured Learning

However, if all of this feels hard and you want something more efficient and less difficult, I suggest taking a course. A course is relatively systematic, and someone is there to keep explaining things and answering your questions. In effect you are paying money to save time and gain knowledge.

The good news is that you do not have to pay right away; you can start with a trial lesson. The course explains in detail what Attention in the Transformer is, including its many fine points. It also covers what resources fine-tuning requires and how to get it done.

After taking the course, I realized there were many small details I had never noticed, and afterward they all felt very clear.

I would also say that it can solve in one or two months problems that might otherwise take you one or two years.

When it comes to learning, you need two things: first, a good teacher who gives you a fast and accurate path; second, answers to whatever questions you have. The course provides both.

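FAQ

What core mathematics does LLM academic research require?

Linear algebra, advanced mathematics (calculus), probability, and convex optimization. Convex optimization may need extra self-study; the others are usually required coursework for graduate students.

Which programming languages and frameworks are recommended for LLM development?

Mainly Python and C/C++, together with frameworks such as PyTorch and TensorFlow. AI-assisted tools can make modern development more efficient.

Which key models and techniques do you need to know to learn LLMs?

Foundational models such as MLP, CNN, RNN, and Transformer, plus LLM-specific techniques such as prompt engineering, retrieval-augmented generation (RAG), and fine-tuning.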
