How Does the Cognee Framework Build AI Knowledge Memory Systems? A 2026 In-Depth Analysis

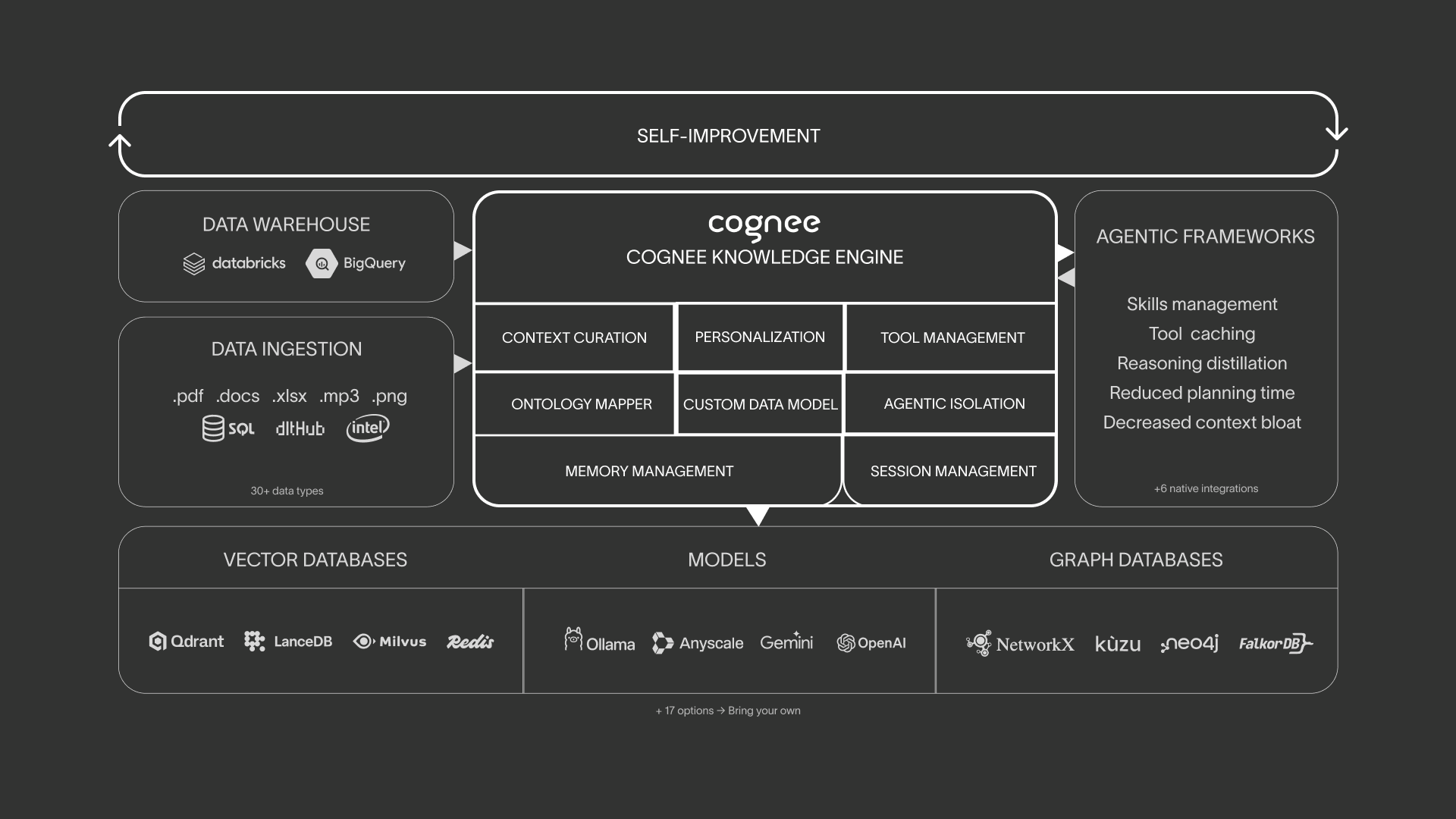

Cognee is an open-source Python framework that builds persistent, dynamic knowledge memory systems for AI applications and agents, combining vector search, graph databases, and cognitive science methods to transform raw data into interconnected knowledge graphs.

Introduction

Cognee is an open-source Python framework designed to build persistent, dynamic, and learnable knowledge memory systems for AI applications and agents. It transcends traditional Retrieval-Augmented Generation (RAG) systems by integrating vector search, graph databases, and cognitive science methodologies to transform raw data into interconnected knowledge graphs. Cognee employs a unique ECL (Extract, Cognify, Load) pipeline architecture, enabling not only semantic searchability of documents but also deep reasoning through entity relationships, thereby endowing AI systems with human-like long-term memory capabilities.

Core Features

Graph-Vector Hybrid Storage

Graph-Vector Hybrid Storage: Seamlessly integrates graph databases (e.g., Neo4j, Kuzu) with vector databases (e.g., LanceDB, Weaviate), supporting dual retrieval based on both semantics and relationships.
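
To make "dual retrieval" concrete, here is a minimal pure-Python sketch of the general idea: rank items by vector similarity first, then expand the best hits along graph edges. It is an illustration only, not Cognee's implementation; the embeddings, the edge map, and the function `hybrid_search` are all hypothetical stand-ins for a real vector store and graph database.

```python
import math

# Hypothetical toy data standing in for a vector store and a graph store.
EMBEDDINGS = {
    "annuity": [0.9, 0.1, 0.0],
    "life insurance": [0.7, 0.3, 0.1],
    "estate tax": [0.2, 0.9, 0.1],
    "mango": [0.0, 0.1, 0.9],
}
EDGES = {
    "annuity": ["beneficiary"],
    "beneficiary": ["estate tax"],
    "estate tax": ["life insurance"],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(query_vector, top_k=1, hops=1):
    """Return semantic hits followed by their graph neighbors."""
    # Step 1 (semantic): rank stored items by similarity to the query.
    ranked = sorted(
        EMBEDDINGS,
        key=lambda name: cosine(query_vector, EMBEDDINGS[name]),
        reverse=True,
    )
    seeds = ranked[:top_k]

    # Step 2 (relational): walk outgoing edges from the seeds, hop by hop.
    results = list(seeds)
    frontier = seeds
    for _ in range(hops):
        next_frontier = []
        for node in frontier:
            for neighbor in EDGES.get(node, []):
                if neighbor not in results:
                    results.append(neighbor)
                    next_frontier.append(neighbor)
        frontier = next_frontier
    return results
```

The design point is that the graph step can surface items (such as "beneficiary") that have no embedding close to the query but are connected to something that does.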

Modular ECL Pipeline

Modular ECL Pipeline: Offers a highly customizable data processing workflow, including data extraction (supporting 30+ data sources), cognification (LLM-driven knowledge graph construction), and loading (memory solidification).
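
The three stages can be sketched as plain functions chained together. This is a toy illustration of the extract, cognify, load shape, not Cognee's pipeline: the function names are hypothetical, the "cognify" step uses a simple regular expression where a real system would call an LLM, and a dict stands in for the graph database.

```python
import re


def extract(sources):
    """Extract: split raw text inputs into a flat list of sentences."""
    sentences = []
    for text in sources:
        for part in re.split(r"[.!?]\s*", text):
            if part.strip():
                sentences.append(part.strip())
    return sentences


def cognify(sentences):
    """Cognify: turn sentences into (subject, relation, object) triples.

    Stand-in for LLM-driven extraction: it only recognizes the
    hypothetical pattern "<subject> is a/an <object>".
    """
    triples = []
    for sentence in sentences:
        match = re.match(r"(.+?) is an? (.+)", sentence, flags=re.IGNORECASE)
        if match:
            triples.append((match.group(1).strip(), "is_a", match.group(2).strip()))
    return triples


def load(triples, graph=None):
    """Load: store triples in an adjacency map (a dict standing in for a graph DB)."""
    graph = {} if graph is None else graph
    for subject, relation, obj in triples:
        graph.setdefault(subject, []).append((relation, obj))
    return graph


def run_pipeline(sources):
    """Chain the three stages in order."""
    return load(cognify(extract(sources)))
```

Because each stage is a separate function with a simple input and output, any one of them could be swapped out, which is the sense in which such a pipeline is modular.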

Multimodal Data Support

Multimodal Data Support: Natively supports text, images, audio transcripts, and past conversation history to build a unified knowledge context.

Agent Memory Management

Agent Memory Management: Provides cross-session persistent memory for agent frameworks like LangGraph and AutoGen, supporting memory updates, forgetting, and self-improvement based on feedback.

MCP Integration

MCP Integration: Offers a Cognee MCP server, allowing the knowledge engine to be integrated as a tool into Claude Desktop or other MCP-compatible clients.

Local-First and Production Deployment

Local-First & Production Deployment: Defaults to local filesystem storage, supports Docker containerized deployment, and can be configured with production-grade backends like PostgreSQL and S3.

Installation and Configuration

Prerequisites

- Python 3.10 to 3.13

Installation

The uv package manager is recommended for quick installation:

uv pip install cognee

Or use pip:

pip install cognee

To install with support for specific backends (e.g., Weaviate), use extensions:

pip install "cognee[weaviate]"

Configuration

Cognee is primarily configured via environment variables. Create a .env file or set the variables directly:

- LLM configuration: set LLM_API_KEY (e.g., your OpenAI API key) and LLM_PROVIDER (defaults to openai).
- Database configuration: set GRAPH_DATABASE_PROVIDER (kuzu, neo4j), VECTOR_DB_PROVIDER (lancedb, pgvector), and the corresponding connection URLs.
- Storage configuration: set STORAGE_PROVIDER (local, s3), etc.
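
As a rough sketch, the variables listed above could also be set from Python before the library is used. The variable names are taken from this article and should be checked against the official documentation for the installed version; the values are placeholders, and the helper `apply_settings` is a hypothetical convenience, not part of Cognee.

```python
import os

# Names as listed in this article; values are placeholders, not real credentials.
EXAMPLE_SETTINGS = {
    "LLM_PROVIDER": "openai",
    "LLM_API_KEY": "sk-placeholder",
    "GRAPH_DATABASE_PROVIDER": "kuzu",
    "VECTOR_DB_PROVIDER": "lancedb",
    "STORAGE_PROVIDER": "local",
}


def apply_settings(settings, environ=os.environ):
    """Copy settings into the environment without overwriting existing values."""
    for name, value in settings.items():
        environ.setdefault(name, value)
    # Report the values that are actually in effect for these names.
    return {name: environ[name] for name in settings}
```

Using `setdefault` means a value already exported in the shell or loaded from a .env file takes precedence over the defaults in the script.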

Core Workflow

Cognee provides both a Python API and a CLI for interaction. The core workflow revolves around Add (ingesting data), Cognify (building knowledge), and Search (retrieving memory).

- Initialization and adding data: import the cognee library and use await cognee.add(data, dataset_name) to add text, file paths, or URLs to the system.
- Building the knowledge graph: call await cognee.cognify(dataset_name). The system uses an LLM to extract entities and relationships and construct the graph structure.
- Querying and retrieval: use await cognee.search(query_text) for hybrid search, or await cognee.get_graph() to obtain the raw graph for traversal.
- CLI operations: operate from the terminal with commands such as cognee-cli add "text", cognee-cli cognify, and cognee-cli search "query".
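
The Add, Cognify, Search sequence can be sketched as one coroutine. To keep the example runnable without an API key, it takes the memory backend as a parameter instead of importing the library; `remember_and_ask` is a hypothetical helper, and the call shapes follow the ones given in this article, which may differ between Cognee versions.

```python
import asyncio


async def remember_and_ask(memory, text, question, dataset_name="demo"):
    """Run Add -> Cognify -> Search on any object exposing those coroutines.

    `memory` is assumed to offer add(data, dataset_name), cognify(dataset_name)
    and search(query_text=...), as described in the list above.
    """
    await memory.add(text, dataset_name)      # ingest raw data
    await memory.cognify(dataset_name)        # build the knowledge graph
    return await memory.search(query_text=question)  # retrieve from memory
```

With Cognee installed and an LLM key configured, the call would presumably be `asyncio.run(remember_and_ask(cognee, "some text", "some question"))` after `import cognee`. Passing the backend in also makes the flow easy to exercise with a stub in tests.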

Use Cases and Worked Examples

Example 1: Enterprise-Grade Intelligent Customer Service Knowledge Base

Scenario: A financial company needs a customer service system that understands how product terms relate to one another. A traditional RAG system can answer "What is an annuity?" but struggles to explain "the difference between annuities and life insurance in estate planning."

Cognee Solution: Load product manuals and regulatory documents into Cognee. When a user asks a complex, relational question, the system not only retrieves relevant passages but also traces paths in the knowledge graph (e.g., Annuity -> Beneficiary -> Estate Tax -> Life Insurance) and grounds its answer in that chain of relationships, which can help reduce hallucinations.
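
Path tracing of this kind is a standard graph search. The sketch below uses breadth-first search over a small hand-written adjacency map; the edges are hypothetical and merely mirror the path named above, and `find_path` is an illustrative helper rather than a Cognee function.

```python
from collections import deque

# Hypothetical edges mirroring the path described in the text.
GRAPH = {
    "Annuity": ["Beneficiary", "Payout Schedule"],
    "Beneficiary": ["Estate Tax"],
    "Estate Tax": ["Life Insurance"],
    "Payout Schedule": [],
    "Life Insurance": [],
}


def find_path(graph, start, goal):
    """Breadth-first search returning the shortest node path, or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None  # no route between the two concepts
```

The returned path is what an answer generator could cite step by step, instead of relying only on passages that happen to mention both products.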

Example 2: Intelligent Research Literature Assistant

Scenario: Researchers need to track the latest developments in a specific field (e.g., "mRNA vaccines"), but new papers appear constantly, making it hard to establish connections by hand.

Cognee Solution: Regularly crawl paper abstracts from arXiv or PubMed and import them into Cognee. The system automatically constructs a graph with nodes such as "Research Institution," "Author," "Target," and "Technical Method." When a researcher queries "application of CRISPR in vaccines," the system can list the relevant papers and visualize the path along which the technology evolved.

Example 3: Long-Conversation AI Companion

Scenario: Develop an AI companion with long-term memory that can recall details the user mentioned three months ago, such as "allergic to mangoes" and "likes sci-fi movies."

Cognee Solution: Add each round of conversation as a data source and set user attributes (e.g., "Allergens," "Preferences"). When the user says "recommend a movie," Cognee retrieves the memory graph, filters out movies with mango-related scenes, and prioritizes sci-fi recommendations, so the interaction reflects what the user has said before.
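
The filter-then-prioritize step can be illustrated with plain data structures. Everything here is hypothetical: the stored user attributes, the made-up movie titles and tags, and the `recommend` helper. In a real system the attributes would come from the memory graph rather than a literal dict.

```python
# Hypothetical attributes recalled from earlier conversations.
USER_MEMORY = {"allergens": {"mango"}, "preferences": {"sci-fi"}}

# Made-up candidate titles with genre and content tags.
CANDIDATES = [
    {"title": "Orchard Summer", "genre": "drama", "tags": {"mango", "family"}},
    {"title": "Quiet Harbor", "genre": "drama", "tags": {"sea"}},
    {"title": "Star Drift", "genre": "sci-fi", "tags": {"space"}},
]


def recommend(memory, candidates):
    """Drop candidates tagged with an allergen, then put preferred genres first."""
    allowed = [m for m in candidates if not (m["tags"] & memory["allergens"])]
    # sorted() is stable and False sorts before True, so preferred genres
    # move to the front while the remaining order is preserved.
    return sorted(allowed, key=lambda m: m["genre"] not in memory["preferences"])
```

Keeping exclusion (a hard constraint) separate from ranking (a soft preference) makes it clear which remembered facts must never be violated and which only influence ordering.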

Project Resources

Project Repository: https://github.com/topoteretes/cognee

Cognee represents a significant step forward in equipping AI systems with sophisticated, structured, and actionable memory. By moving beyond flat document retrieval to a dynamic knowledge graph paradigm, it opens new possibilities for creating more intelligent, context-aware, and reliable AI applications across various domains.

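Frequently Asked Questions (FAQ)

How does Cognee optimize geographic data storage and retrieval in a knowledge system?

Through graph-vector hybrid storage, Cognee integrates graph databases with vector databases and supports retrieval by both semantics and relationships, so it can handle the spatial relationships and attribute information of geographic entities.

How does Cognee's ECL pipeline improve the efficiency of building geographic knowledge graphs?

The modular ECL pipeline provides a highly customizable workflow covering extraction from 30+ data sources, LLM-driven cognification into a knowledge graph, and memory solidification, so multi-source geographic data can be processed and linked automatically.

How does Cognee provide cross-session persistent memory for geographic agents?

Its agent memory management provides cross-session persistent memory for frameworks such as LangGraph, supporting updates to geographic knowledge, forgetting, and feedback-based self-improvement, which keeps memory continuous across sessions.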
