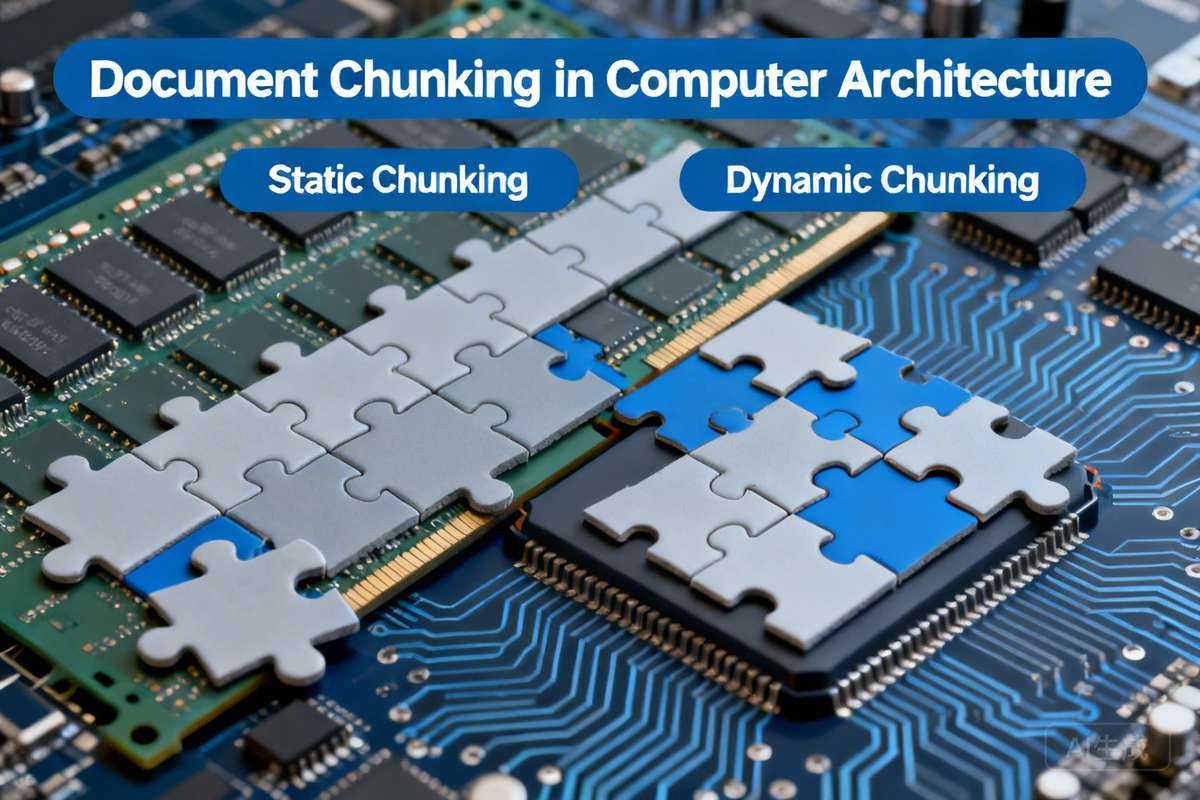

Although LLM context windows keep growing, document chunking remains crucial for reducing latency in retrieval-augmented generation (RAG) applications. This article explores static and dynamic chunking techniques, including traditional information retrieval (IR), neural IR with embeddings, and ColBERT, and emphasizes that the best method depends on the requirements of the specific application.
